Impact statement: CVE-2026-2652 is a newly published authentication-bypass issue affecting MLflow versions before 3.10.0. This is not an ordinary website bug: MLflow often sits near model artifacts, experiment data, tokens, notebooks, build systems, containers, and internal automation, so a gap in its access checks can expose far more than the tracking UI. If an MLflow server is reachable from an untrusted network, admins should update, restrict access, and review recent activity.

MLflow is common in AI, data science, and MLOps environments. It may be installed directly on a VM, inside Docker, behind Nginx or Apache, in a Kubernetes namespace, or on a shared research server. Even when teams believe authentication is enabled, this CVE describes a deployment mode where some server functions may not receive the intended access checks.

Who should check this

- Teams running MLflow servers for experiment tracking, model registry, model serving, or internal MLOps workflows.

- Hosting, DevOps, and MSP teams that expose MLflow through a reverse proxy, VPN, zero-trust tunnel, Kubernetes ingress, or internal developer portal.

- Data-science teams that store secrets, model artifacts, training outputs, customer datasets, or cloud credentials near MLflow workloads.

| Component | Affected versions | Fixed version | Primary concern |
|---|---|---|---|
| MLflow | Versions before 3.10.0 | 3.10.0 or newer | Authentication checks may not protect all intended server functionality in a specific deployment mode |

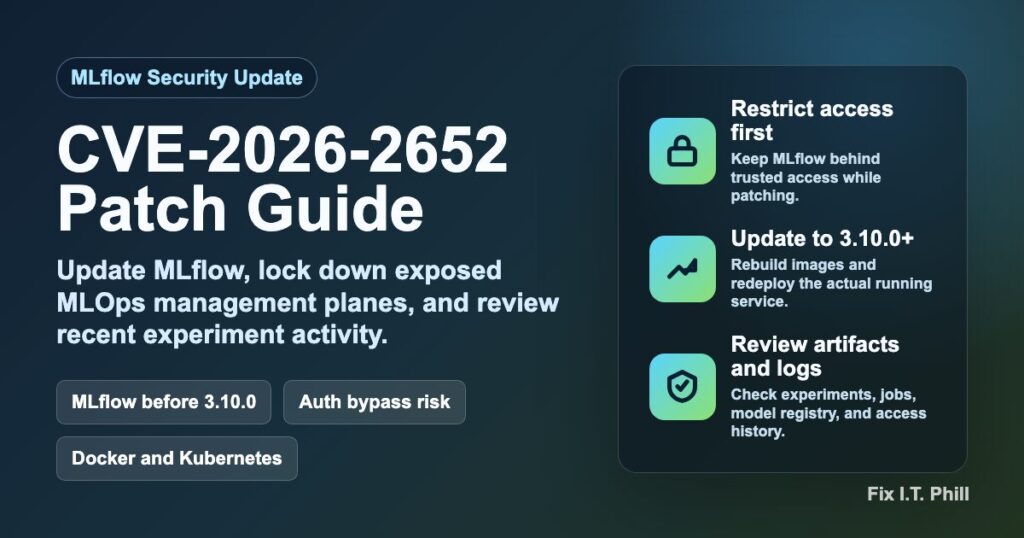

What to do now

Update MLflow to 3.10.0 or newer. If your environment can move to a newer stable release after testing, prefer the newest supported release. Treat public MLflow exposure as risky until the update and access review are complete.

Safe version checks

Use whichever check matches how MLflow is installed in your environment:

python -c "import mlflow; print(mlflow.__version__)"

pip show mlflow

For containers and Kubernetes, check the image tag used by the deployment, not only the version on an admin laptop. If the image builds MLflow during CI, rebuild the image after updating the dependency and redeploy from the rebuilt artifact.
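When the check has to run across many environments, the comparison against 3.10.0 can be scripted. The following is a minimal sketch, not an official MLflow tool: the helper names `parse_release` and `is_patched` are made up for illustration, and the parser reads only the leading numeric components, so a pre-release such as `3.10.0rc1` would be treated like `3.10.0`. It compares numbers rather than strings because, as plain text, "3.9.1" sorts after "3.10.0".

```python
import re
from importlib import metadata

# First fixed release, taken from the advisory table in this article.
FIXED_RELEASE = (3, 10, 0)


def parse_release(version: str) -> tuple:
    """Return the leading numeric components, e.g. '3.9.1rc1' -> (3, 9, 1)."""
    match = re.match(r"\s*v?(\d+)(?:\.(\d+))?(?:\.(\d+))?", version)
    if match is None:
        raise ValueError(f"unrecognised version string: {version!r}")
    # Missing components (as in '3.10') are treated as zero.
    return tuple(int(part) if part else 0 for part in match.groups())


def is_patched(version: str) -> bool:
    """True when the numeric release is 3.10.0 or newer (suffixes are ignored)."""
    return parse_release(version) >= FIXED_RELEASE


if __name__ == "__main__":
    try:
        # Reads the version of whatever mlflow is installed for this interpreter.
        installed = metadata.version("mlflow")
    except metadata.PackageNotFoundError:
        print("mlflow is not installed in this Python environment")
    else:
        status = "patched" if is_patched(installed) else "needs update"
        print(f"mlflow {installed}: {status}")
```

Run it with the same interpreter, virtual environment, or container that actually serves MLflow; a result from a different environment says nothing about the running service.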

Patch workflow

- Take a backup first. Back up MLflow metadata storage, artifact storage configuration, reverse-proxy configuration, Kubernetes manifests, and any deployment variables needed for rollback.

- Update MLflow. Move to 3.10.0 or newer in the Python environment, container image, package lockfile, or platform image that actually runs the service.

- Restart safely. Restart the MLflow service, container, or Kubernetes deployment during a maintenance window.

- Restrict access. Keep MLflow behind VPN, SSO, private networking, a bastion, or admin IP allowlisting. Do not leave MLflow directly reachable from the public internet.

- Retest normal workflows. Confirm experiment tracking, artifact reads, model registry actions, model-serving handoffs, and team login flows still work.

- Review recent activity. Look for unexpected experiment changes, unexpected job activity, unusual artifact access, new or modified users, abnormal trace data, and unfamiliar source IPs.

If you cannot patch immediately

Temporary mitigation should focus on exposure reduction. Put MLflow behind private access, block direct public traffic, require a trusted access layer in front of the service, and limit who can reach the server. If job or automation features are not needed, disable them according to your internal deployment standard until the patch is installed.
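One way to confirm that exposure has actually been reduced is to attempt a plain TCP connection from a machine outside the trusted network. The sketch below assumes nothing about MLflow itself: `is_reachable` is a hypothetical helper, and a successful connection only shows that the port is open along that network path, not what is answering or whether it is vulnerable.

```python
import socket


def is_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the name and tries each returned address.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS resolution failures.
        return False
```

For example, `is_reachable("mlflow.example.internal", 5000)` run from an untrusted vantage point should return `False` once access is restricted. The hostname is a placeholder; 5000 is the default port for `mlflow server`, but your deployment or reverse proxy may listen elsewhere.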

A WAF or CDN can help reduce accidental exposure and add access controls around a public hostname, but it is not the permanent fix. The durable fix is updating MLflow and ensuring the service is not broadly exposed.

What defenders should review

- MLflow server logs and reverse-proxy logs around the disclosure window.

- Experiment, run, model registry, and artifact history for unexpected changes.

- Cloud, database, storage, and source-control credentials that may be reachable from the MLflow host.

- Container and Kubernetes audit logs for unexpected redeploys or image changes.

- Firewall, VPN, SSO, and access-control logs for unusual MLflow access patterns.
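For the log review, a first pass can simply count requests by source address and set aside the ones that fall inside known-trusted ranges. This is a rough sketch under stated assumptions: it expects access-log lines whose first whitespace-separated field is the client IP (as in the common Nginx and Apache "combined" layout), and the networks in `TRUSTED_NETWORKS` are placeholders to replace with your own VPN or admin ranges. If a CDN or another proxy sits in front, the first field may be that proxy's address rather than the real client.

```python
import ipaddress
from collections import Counter

# Hypothetical trusted ranges; replace with your VPN and admin networks.
TRUSTED_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def is_trusted(address: str) -> bool:
    """True if the address falls inside any trusted network."""
    ip = ipaddress.ip_address(address)  # raises ValueError on non-IP text
    return any(ip in network for network in TRUSTED_NETWORKS)


def untrusted_sources(log_lines) -> Counter:
    """Count requests per source IP for addresses outside the trusted ranges."""
    counts = Counter()
    for line in log_lines:
        fields = line.split(maxsplit=1)
        if not fields:
            continue  # blank line
        try:
            if not is_trusted(fields[0]):
                counts[fields[0]] += 1
        except ValueError:
            continue  # first field is not an IP address; skip the line
    return counts


if __name__ == "__main__":
    import sys

    # Usage: python review_sources.py < access.log
    for address, hits in untrusted_sources(sys.stdin).most_common(20):
        print(f"{hits:8d}  {address}")
```

The output is a starting list for manual review, not a verdict: an unfamiliar address may still be a legitimate user on a new network.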

Hosting and Kubernetes notes

For Kubernetes, patch in a cluster-safe order: update the image tag or dependency source, apply to staging first, roll the deployment, watch readiness and logs, then repeat in production. Keep the old image tag available until the new deployment has passed workflow tests. For Docker Compose or systemd services, preserve the previous config and service file so rollback is mechanical.
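Before rolling a deployment, it can help to list which image references the manifests actually point at. The sketch below is a deliberately simple text scan, not a YAML parser: it assumes each image appears on its own `image:` line, and the helper names and the registry path in the usage note are made up for illustration. An image tag is also only a label, so confirm the MLflow version inside the running container rather than trusting the tag alone.

```python
import re

# Matches lines such as "image: repo/name:tag" or "- image: 'repo/name:tag'".
IMAGE_LINE = re.compile(r"^\s*(?:-\s*)?image:\s*['\"]?([^\s'\"#]+)", re.MULTILINE)


def image_references(manifest_text: str) -> list:
    """Return every image reference found on an 'image:' line."""
    return IMAGE_LINE.findall(manifest_text)


def image_tag(reference: str):
    """Return the tag of an image reference, or None if it has no tag."""
    name = reference.split("@", 1)[0]        # drop any @sha256:... digest
    last_segment = name.rsplit("/", 1)[-1]   # ignore a registry host:port prefix
    if ":" not in last_segment:
        return None
    return last_segment.rsplit(":", 1)[1]
```

Given a manifest line like `image: registry.example.internal:5000/mlops/mlflow-server:3.9.1`, `image_tag` returns `"3.9.1"`. References with no tag, or with only a digest, return `None` and deserve a closer look, since it is not obvious from the manifest which build they resolve to.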

For web-hosting and MSP environments, inventory any MLflow hostname, subdomain, reverse-proxy route, or developer portal entry. If MLflow is not a customer-facing application, it should normally be private. Tell customers plainly: MLflow has a security update, the server should be updated to 3.10.0 or newer, and access should be limited to trusted users and networks.

CDN and WAF handoff

CDN/WAF teams should treat this as an access-control and management-plane exposure review. Prefer allowlisting, VPN/SSO enforcement, or challenge mode for MLflow hostnames while owners patch. Keep any request-level rule engineering private and do not publish tuning details.

Bottom line

If MLflow is reachable from users outside a trusted admin network, update it now and tighten access. AI and data-science tools often hold sensitive operational context, so the cleanup checklist should include credentials, artifacts, model history, automation logs, and container or Kubernetes deployment history.